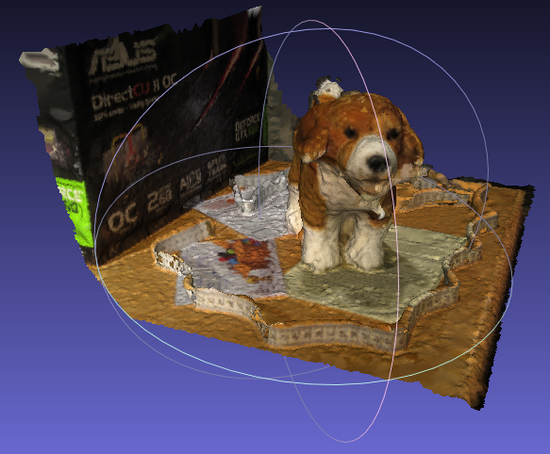

A case study: the epigraphic survey at the Ramesseum

As a member of the French Archaeological Mission to Western Thebes (MAFTO), led by Dr. Christian Leblanc, I have been working with Dr. Philippe Martinez, Egyptologist and epigraphist, and Kevin Cain, founder and director of INSIGHT, on the implementation of a pipeline that combines pointcloud data derived from both Structure from Motion (SfM) and ground truth scanned data. The aim was to benefit from the geometric accuracy of the latter and from the texture information conveyed by the former, in order to obtain an accurate 3D textured model that may be used as a trustworthy document supporting the epigraphic survey of the hieroglyphic inscriptions of the temple of Ramses II in Western Thebes (production of orthophotographs of any kind of architectural component, such as columns, lintels, walls, etc.). The ultimate goal was to define a fully automatic process to be applied to the whole monument, but for various reasons this has not been possible yet, so we settled on a partially manual approach that is feasible to implement.

We decided to carry out an experiment on the Coronation Scene of the wall located in the Second Court of the Temple of Ramses II.

Figure 2. Ground truth mesh from scanned pointcloud.

The Structure from Motion toolkit used is VisualSFM (version 0.5.20), developed by Changchang Wu of the University of Washington at Seattle. The dense reconstruction is processed with CMVS/PMVS, developed by Yasutaka Furukawa, University of Washington, and Jean Ponce, École Normale Supérieure. The pointcloud and the mesh were displayed and edited with Meshlab (version 1.3.2), developed at the Visual Computing Lab of the ISTI-CNR / University of Pisa (Italy), and we are currently in discussion with Matteo Dellepiane about an automatic approach to the processes described below.

For the full background of this project, please have a look at « A Decade of Digital Documentation at the Ramesseum », available on the INSIGHT website.

This experiment is a side project for me, and its outcome will definitely benefit my main project, the Archaeological Map of the Western Thebes, which I will present here soon.

1. Production of the sparse and dense pointclouds

This part is straightforward and consists of following the steps mentioned on the front page of the VisualSFM website.

N.B.: Sometimes, with a huge number of photographs, the software will encounter difficulties and fail to load any of the files. A good workaround is to load the photographs step by step, in smaller sets.

SfM \ Pairwise Matching \ Compute Missing Match (or the relevant icon)

N.B.: This may take a while, especially with a huge collection of photographs. It is therefore possible to kill the pairwise matching process at any time by closing the VisualSFM window, then launching the software again, loading all the images needed and resuming the matching process by clicking the relevant icon again. The software will automatically work out how to resume the process. It should also be mentioned that the software may crash during an overnight computation, so whenever possible one should check that the process is still running.

Figure 5. The dense pointcloud reconstructed and displayed in VisualSFM.

The original or raw photos should be exported to JPEG (best quality), downscaled to 3200 pixels (see the VisualSFM documentation for an explanation). If possible, the chromatic aberration should be corrected (i.e. in Adobe Lightroom, Develop \ Activate the profile correction) before exporting the JPEGs. That should increase the number of matched features across the set of photographs. That said, there is no need to correct the lens distortion at this stage: VisualSFM will allow PMVS to manage it later, once the camera parameters have been estimated.
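To make the 3200-pixel downscale mentioned for the JPEG export more concrete, here is a minimal Python sketch that computes the target dimensions of a photograph. It assumes that the 3200-pixel limit applies to the longer side of the image and that the aspect ratio is preserved; the function name `downscaled_size` and the sample dimensions are hypothetical, chosen for illustration only. The resizing itself would still be done by the export tool (Lightroom, for instance).

```python
def downscaled_size(width, height, max_side=3200):
    """Return (width, height) scaled so that the longer side is at most max_side.

    Assumption: the 3200-pixel limit applies to the longer side of the image.
    Images that are already small enough are returned unchanged.
    """
    longest = max(width, height)
    if longest <= max_side:
        return width, height
    scale = max_side / longest
    # Round to whole pixels and never go below one pixel.
    return max(1, round(width * scale)), max(1, round(height * scale))


# Hypothetical example: a 5616 x 3744 pixel photograph.
print(downscaled_size(5616, 3744))
```

Images that are already 3200 pixels or smaller on their longer side are left untouched, so the export never upscales a photograph.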
